AI is revolutionising information retrieval... but at what price?

Artificial intelligence (AI) search engines promise rapid, summarised access to information. Gemini, Perplexity, ChatGPT and other generative search tools are positioning themselves as alternatives to traditional search engines. However, a study by the Columbia Journalism Review (CJR) revealed a major flaw: these tools struggle to cite their sources correctly. Analysing eight AI engines, the researchers found that they often misattribute news content, sometimes produce non-existent links and cite syndicated versions of articles rather than the originals, making verification difficult. The study raises a crucial question: how can these tools be integrated into a content strategy without sacrificing the rigour and transparency of the information?

A study on the reliability of citations from AI engines

CJR's Tow Center for Digital Journalism evaluated eight AI search engines to examine their ability to correctly cite news sources.

Methodology

- 1,600 queries submitted to these tools

- 10 articles selected from each of 20 different publishers

- Each citation checked for accuracy (article title, publisher and URL)
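The three-part check listed above (article title, publisher, URL) can be illustrated with a minimal Python sketch. It is not the scoring procedure CJR used: the `Citation` class, the `check_citation` and `is_fully_correct` functions, the normalisation rules and the example.com URLs are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Citation:
    """Hypothetical record of one citation: the three fields named in the methodology."""
    title: str
    publisher: str
    url: str


def normalise(text: str) -> str:
    """Lower-case and collapse whitespace so trivial differences do not count as errors."""
    return " ".join(text.lower().split())


def check_citation(expected: Citation, returned: Citation) -> dict[str, bool]:
    """Compare each field of the citation an engine returned with the known original."""
    return {
        "title": normalise(expected.title) == normalise(returned.title),
        "publisher": normalise(expected.publisher) == normalise(returned.publisher),
        # Assumption: a trailing slash is ignored; any other URL difference is a mismatch.
        "url": expected.url.rstrip("/") == returned.url.rstrip("/"),
    }


def is_fully_correct(expected: Citation, returned: Citation) -> bool:
    """A citation only counts as correct when all three fields match."""
    return all(check_citation(expected, returned).values())


# Illustrative data only: the engine names the right article and publisher
# but links to a syndicated copy hosted elsewhere.
original = Citation("Example headline", "Example Daily", "https://example.com/news/story")
answer = Citation("Example Headline", "Example Daily", "https://syndication.example.org/story")

result = check_citation(original, answer)
```

In this made-up case the title and publisher match but the URL does not, which is the syndication problem the study describes: the answer looks right, yet the reader is not sent to the original source.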

The results are worrying, and highlight major shortcomings in sourcing.

Massive errors and unclear sources

Highly variable accuracy

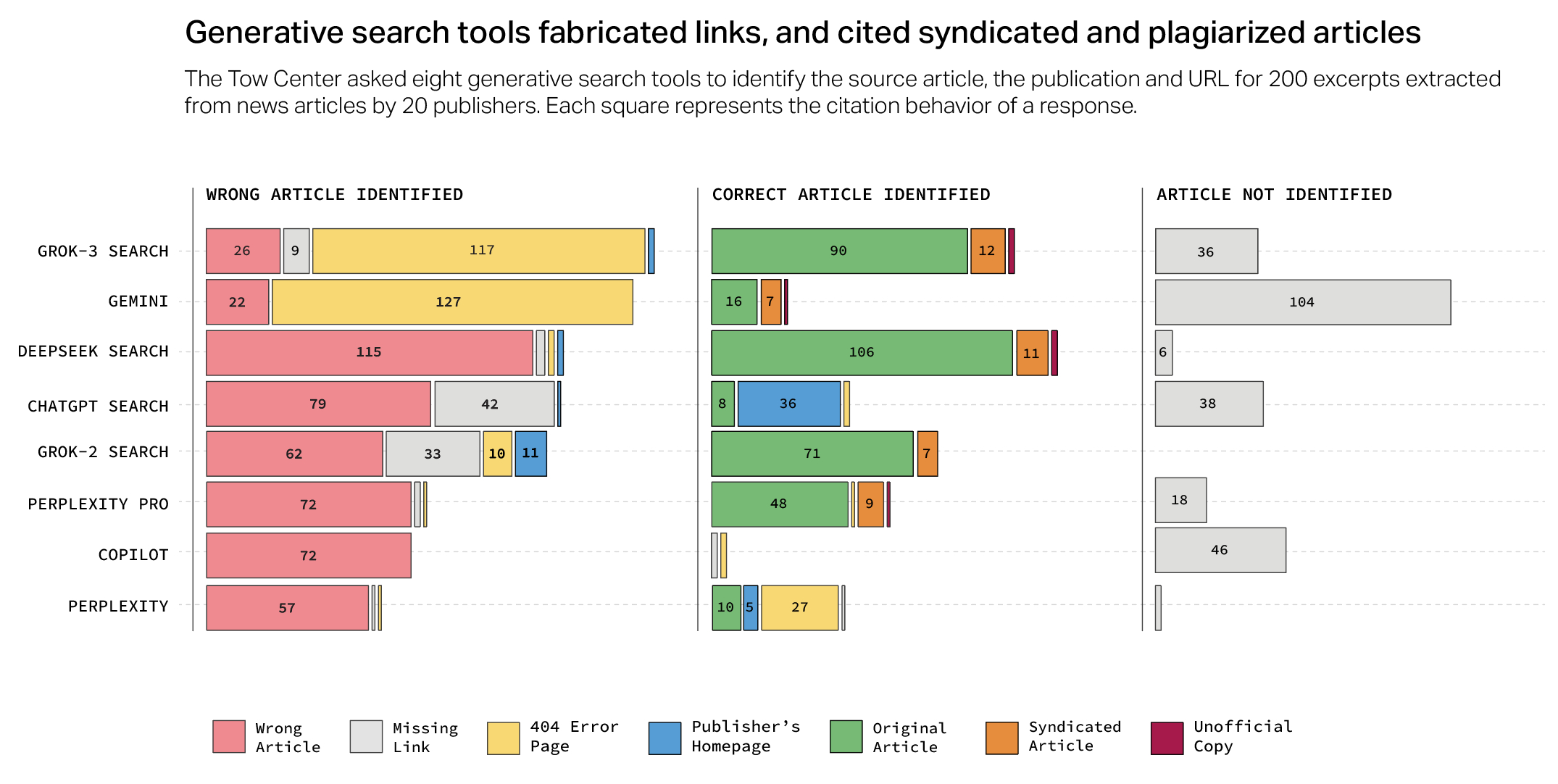

The study reveals that, taken together, the AI engines tested gave incorrect answers to more than 60% of queries. Error rates vary widely from one tool to the next:

- Perplexity: 37% errors

- Google Gemini: 54% errors

- Grok 3: 94% errors

Approximate and sometimes fabricated citations

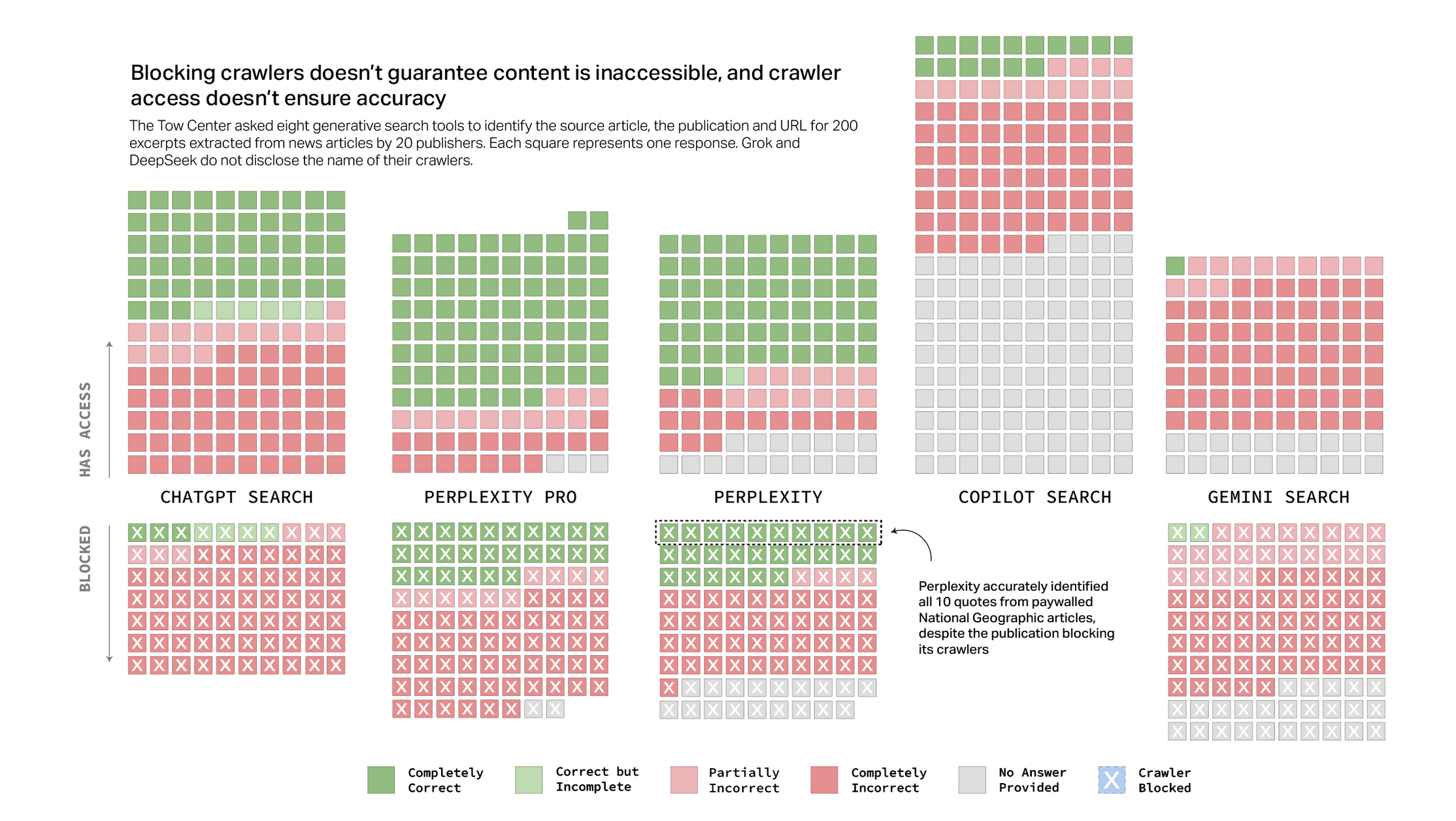

- The engines tested generated incorrect citations and non-existent links.

- Some linked to syndicated versions of articles, making it difficult to identify the original sources.

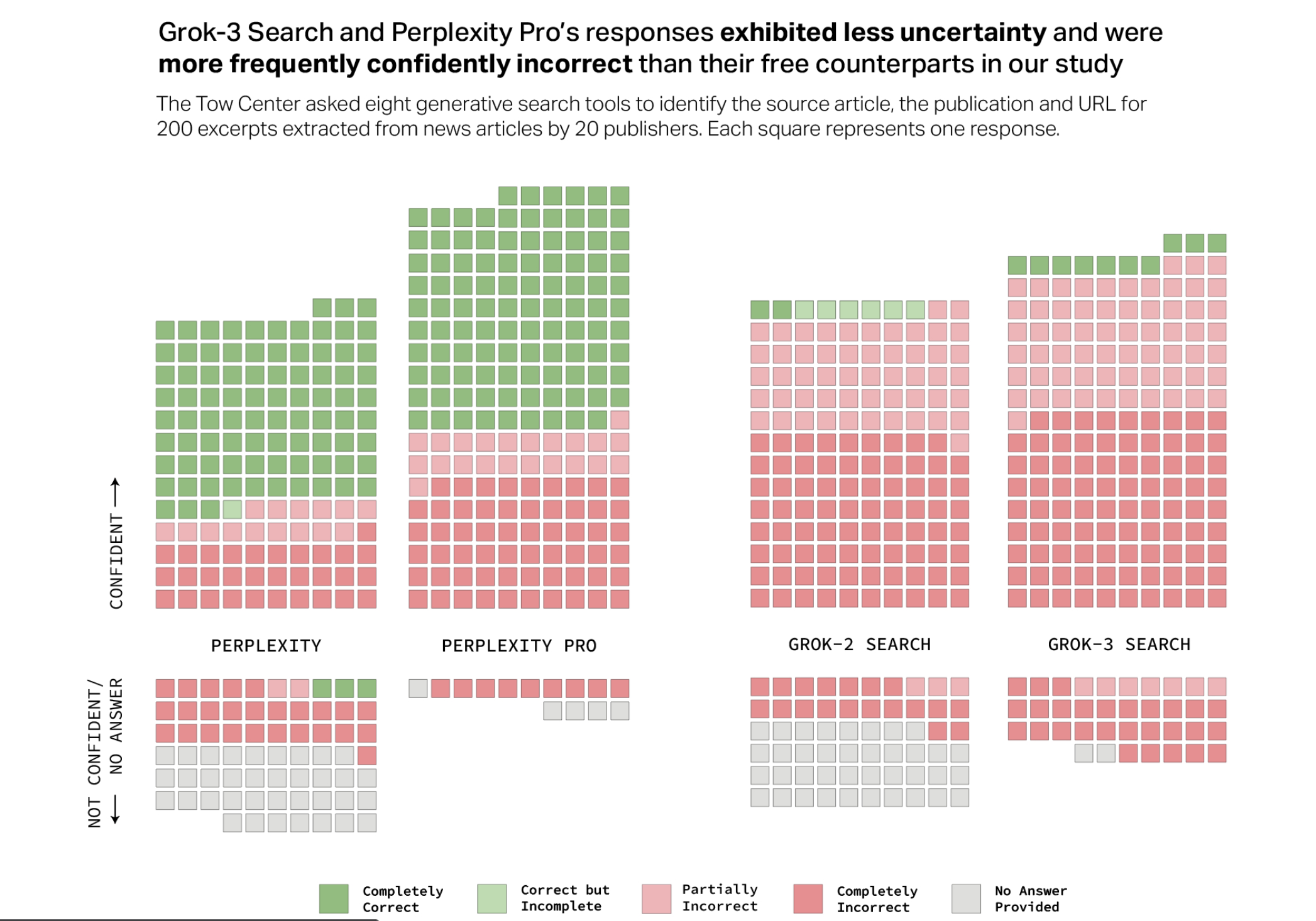

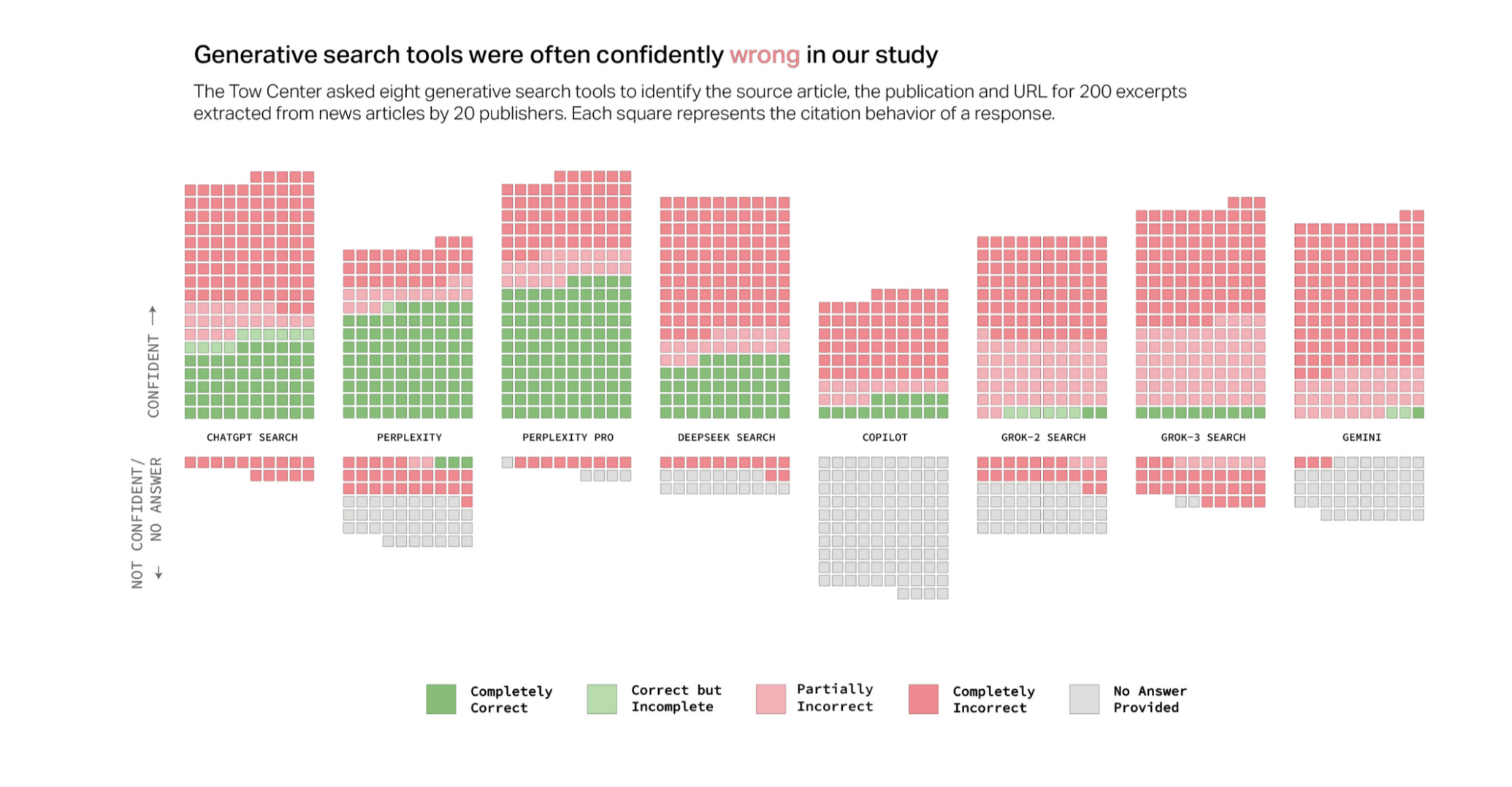

Overconfidence in the answers

- ChatGPT gave 134 incorrect answers out of 200, but expressed doubt in only 15 cases.

- The chatbots tested rarely declined to respond, even when the information was clearly incorrect or impossible to verify.
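To put the ChatGPT figures above into proportion, here is a short Python sketch of the arithmetic. The `share` helper and the variable names are hypothetical; the numbers (200 answers, 134 incorrect, 15 expressing doubt) come from the study as reported here.

```python
def share(part: int, total: int) -> float:
    """Return `part` as a percentage of `total`."""
    if total <= 0:
        raise ValueError("total must be positive")
    return 100 * part / total


total_answers = 200   # answers given by ChatGPT in the study
incorrect = 134       # answers judged incorrect
doubtful = 15         # answers in which the tool expressed doubt

error_rate = share(incorrect, total_answers)  # 67.0
doubt_rate = share(doubtful, total_answers)   # 7.5

print(f"Incorrect answers: {error_rate:.1f}%")
print(f"Answers expressing doubt: {doubt_rate:.1f}%")
```

In other words, about two answers in three were wrong, while fewer than one in ten carried any signal of uncertainty.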

Why are these gaps a problem?

A direct impact on the credibility of information

If AI engines cannot guarantee that sources are cited correctly, their reliability as research tools is called into question. Most users will not check every reference, so they risk absorbing misattributed or truncated information.

A lack of recognition for content publishers

The media and news publishers invest time and resources in producing reliable information. If AI engines exploit this content without properly crediting it, they deprive these players of legitimate traffic and weaken the information ecosystem.

An increased risk of misinformation

When sources are misattributed or quotes are invented, the risk of disseminating false information increases. These errors, amplified by AI, can undermine understanding of current affairs and fuel mistrust of the media.

AI: a powerful but imperfect tool

This study is a reminder of a fundamental principle: AI, no matter how good it is, is no substitute for human interpretation and a well thought-out content strategy, in marketing for example. You still have to separate the wheat from the chaff.

What AI can do for you

- Rapid analysis and efficient synthesis of information

- The ability to process large volumes of data in a short space of time

- A tool to help you find and structure content

What AI does not replace

- Human expertise in assessing and prioritising sources

- The ability to contextualise and verify information

- A well thought-out editorial strategy, based on a solid structure and co-creation with experts, both inside and outside the company.

Conclusion: AI, a lever to be used with discernment

AI search engines offer interesting prospects for accessing information, but their inability to properly cite sources is a major limitation. Blind use of these tools can undermine the credibility of content and fuel misinformation.

AI should be seen as an assistant, not as a reliable source in its own right. Rigorous human supervision remains essential to guarantee the quality and accuracy of content. At Nutrimedia, we are convinced that any content strategy must be structured, sourced and validated by experts.

Have you ever noticed citation errors in the answers provided by AI engines? Share your experience in the comments.